The New Age of Performance Anxiety

With the rise of screen culture, all the world has stage fright.

The beta-blocker propranolol has been a mainstay of American medicine since the 1960s, when it was regularly prescribed as a first-line defense against hypertension, arrhythmia, and other cardiovascular problems. Recent years, though, have seen a boom in the medication’s prescription rates—in part because as the drug regulates the heart, it also settles the nerves. Off-label, propranolol is used to calm the assorted storms of stage fright: the sweaty palms, the belly churn, the racing heart. Even professional performers have publicly alluded to using it. Robert Downey Jr., accepting an award at the 2024 Golden Globes, said casually, “I took a beta-blocker, so this is going to be a breeze.” Last fall, a People magazine headline asked, “Why Is Everyone Suddenly Taking the Decades-Old Chill Pill Propranolol?”

One answer is that stage fright, in the world shaped by the internet, is no longer limited to the stage. Smartphones and the social-media platforms installed on them—portable movie studios in miniature—have transformed their users into life’s cinematographers, directors, producers, and distributors. Those capabilities are at this point familiar. But in ways that are steadily becoming more clear, they are also transforming relationships—adding new uncertainty to what were once mundane interactions, tweaking people’s nervous systems, unsteadying their very sense of self.

This is one more way in which the technology that was meant to connect us has imposed distance. The personal-broadcasting capabilities of social media have, in many cases, not so much enhanced connection as erected a sort of scrim. Online, people are not just humans interacting directly with other humans. They are also actors interacting with an audience—one populated by potential critics.

This dynamic has eroded the old distinctions between the performing of life and the living of it. Whether you are cooking or cleaning or hiking or deciding the outfit you’ll wear to face the day, the culture of proliferating handheld cameras has made it possible for you to be, at all times, two things at once: human and object; three-dimensional body and two-dimensional image; who and what. “There was of course no way of knowing whether you were being watched at any given moment,” George Orwell wrote in 1984, of the “telescreens” that operate, in the novel’s dystopia, as portals to continuous entertainment—and surveillance. Our versions of those screens are wielded not by Big Brother but by Big Everyone.

Sometimes, this can lead to immense good: People acting as ad hoc documentarians have exposed injustices that might otherwise have gone unrecorded. But our phones’ capabilities have also led to less straightforwardly just realignments of power. They have brought new vulnerabilities and, for many people, new apprehension to the simple act of existing in public, where anyone is at risk of being ensnared in another person’s lens. You might be taken out of context. You might be cast as the star—or as an extra, or as scenery—in someone else’s show. You might be edited, turned into a hero or a villain or a joke. No medication can cure the broader societal ailment: Mass self-consciousness is ascendant. Performance anxiety is becoming a way of life.

A few years ago, the clothing chain H&M launched an ad campaign promising that its fast-casual clothes would allow the people who wore them to become “the main character of each day.” With its use of main character, the campaign recognized the rise of a quintessentially modern class of language: Words once reserved for describing dramas on the stage or screen have been repurposed to describe consumers’ ordinary realities.

People now narrate their personal “character arcs” and bemoan those who have “lost the plot” or been “canceled.” The language, in one way, is a continuation of Americans’ enduring fascination with those who are “larger than life” and with events that seem cinematic. But the new vernacular makes those old ideas literal. They are scripts fit for a show that never ends.

What H&M’s marketing gurus had likely not accounted for, however, is that main character, nearly as soon as it was identified as an aspiration, would come to describe something less aspirational—and less desirable. On the site formerly known as Twitter, “main characters” went from being stars and heroes to subjects of mass mockery. “Each day on twitter there is one main character,” the poster @maplecocaine observed in 2019. “The goal is to never be it.”

Language, especially the version of it that arises on the internet, can have forensic value. The terms that catch on typically capture essential truths and beliefs. Main-character energy is, in that sense, a turn of phrase that hints at a widespread condition: the ever-more-inescapable demands of performance.

The stage has traditionally been a place of exceptionalism—a platform where real life stops, where disbelief is suspended. Theaters emphasize the divide between the actors under the lights and the audience in the darkness. When movie houses became a feature of American life, in the early 20th century, they replicated that division. Their wall-high screens and rows of seats enforced the sharp divide between fantasy and reality—and imposed, on the audience, a state of passivity. People came to watch, to be entertained, and then to file out onto the street.

But these divisions have become far less tidy now that small, interactive screens have been thrust into so many people’s hands. Here, the old grammars and demarcations do not always apply. Are people the audience or are they the content being consumed? They are both.

This new state of affairs is transforming society bit by bit and pixel by pixel. Human brains are programmed for in-person interaction, adept at both assessing other people and reading the room. They are less equipped to understand the new performativity and artificiality created by the cold distance of the screen.

Our language has evolved to acknowledge this too. Consider the ubiquity of the word performative—which, in its modern use, has come to serve as a description and an all-purpose insult. Someone might express a political opinion, and another person might dismiss it: “performative.” Someone might express a kindness, a conviction, a joke: “performative.” Invoking performativity, people now blithely reject other people’s actions—and, by extension, other people’s claims about their own lives—as fakery made in the service of the show.

As a matter of pure rhetoric, performative is extremely effective. It is unfalsifiable: Sincerity is a difficult thing to prove. In every other way, though, performative is pernicious. Invoked as a default reaction to other people’s behavior, it can breed widespread mistrust—a cynicism that extends far beyond individual interactions. This is especially ironic given that performativity was originally defined, decades ago, as something that builds community rather than prevents it.

In a series of books published in the mid-20th century, the sociologist Erving Goffman analyzed everyday social interactions as performances in miniature, identifying the scripts—subtle, but always there—that seemed to govern the exchanges. Goffman studied etiquette and culture and made their quiet workings explicit. He observed that, using stage directions such as small talk and body language, people essentially read one another’s behaviors like texts and tried to respond in ways that would make sense to their scene partners. For Goffman, performativity—“presentation of the self,” he called it—was an element of an ensemble show. And in the give-and-take of performance, everyone played an important role, helping define and reinforce the community.

But today, performative is commonly summoned as an accusation—of attention seeking, of insincerity, of main-character energy gone wrong. That usage stifles community rather than fosters it. It is a new insult lent power by a long-standing trend: Americans, in general, tend to hold extremely ambivalent ideas about fame. They revere fame but also distrust it. They value it as a commodity but resent it as a goal.

The puritanism that prowls the back caverns of the culture tends to snap to attention when it detects a whiff of vanity. And the endless stage born of screen culture has made that tension ever more punishing. People are compelled to be actors, always maintaining a performance. They are also expected to be wary as audiences, and to dismiss other people’s performances—as clickbait, fame mongering, or bids for clout. Authentic enough has now joined rich enough and thin enough as stubbornly elusive aspirations.

Performative, as a dismissal, can’t help but breed performance anxiety. When people are conditioned to see other people not as people at all, but as characters in a never-ending drama, that conditioning influences everything else: etiquette, ethics, the scripts that bind individuals into collectives. The old assumptions get replaced by another system of interaction—the kind that revolves around celebrity.

A celebrity is “a known individual who has become a marketable commodity,” in the historian Simon Morgan’s tart phrasing. Celebrities, consequently, are what Andy Warhol once called “half people”: flesh-and-blood humans who function, for their admirers and detractors, not as facts but as fictions. They also function as images to be read, analyzed, and judged.

Celebrities may hope for fame and reap its rewards, yet they must endure its costs too. That has always been part of the deal. But fame’s new accessibility, made possible by smartphones, has meant that anyone might become a star—and any star, too, might be dimmed.

Americans long accustomed to regarding celebrities as art to be appreciated or assessed are now putting their well-honed skills as critics to use in their interactions with the everyday people they encounter on, and sometimes off, the screen. They read body language. They look for revealing tweets and “likes” and Facebook posts. They ferret out those who are “sus” and make elaborate cases for why people are “MAGA-coded” or “lib-coded.” They praise those who seem, from a distance, “boyfriend-coded.” They identify people’s red flags, green flags, beige flags. They might accept the claims that people make on their own behalf. Or they might call them performative and flick them away.

The result is a type of unease that permeates even straightforward social interactions. A performance, traditionally, is the product of rehearsing, honing, and stress testing: a final draft, polished for public consumption. When life becomes a ceaseless performance, those standards of perfectionism can leak into any exchange—and anxiety, an activation of the body’s fight-or-flight response, might sink in. People navigate the world with brains meant to protect them from the fanged beasts of the savanna. Now our predators stalk us with their phones.

When the coronavirus pandemic officially ended—and when “social distancing” receded as a public-health-mandated survival tactic—many people kept wearing masks. They did so not as a defense against other people’s germs but as a defense against other people’s eyes. A common explanation was, I’m sick of being perceived.

Stage fright is mostly a fear of being looked at: all those eyes, gazing and judging. Performance anxiety causes the human nervous system to start turning on itself. In the body politic, this anxiety can work similarly—pushing people to turn inward.

Consider that in the months after vaccines made it possible for more and more people to emerge from seclusion, a new term began spreading on social media: FODA, short for fear of dating again. The hesitance that some people expressed was not based on a fear of exposure to the coronavirus. The threat, instead, was anticipatory awkwardness—people’s assumption that, over many months of social isolation, they had lost their capacity to conduct conversations that would be smooth and winning or, put another way, screen-worthy.

That kind of anxiety, whether it involves dating or other social interactions, can feed on itself. If you don’t interact with people regularly, each encounter can bring more pressure to “perform” well. The less you’re around other people, the less patient you might be with the foibles that can compromise a performance, whether your own or someone else’s. The desire for a stage environment that is under total control—in which every line sparkles, in which no awkward pauses occur, in which misspeaking and misunderstandings are violations—may be a rational response to the pressure to be forever “on.” The main character, after all, has one job: to put on a good show. But when the show never ends, the need to stage-manage doesn’t either. And that can be exhausting.

The sociologist Charles Horton Cooley, writing around the turn of the 20th century, described the rise of the “looking-glass self,” the tendency to perceive one’s self through other people’s reactions. Phone-based life has made Cooley’s concept literal—and immediate. Never before have we seen ourselves so incessantly. Never has self-consciousness about the way we present been so intense.

No wonder that, in this world of screens that double as inescapable mirrors, cosmetic surgery is on the rise in the United States. No wonder that, after a pronounced decline in use since their heyday, in the late 2000s, tanning beds are popular again—a trend driven by young people following the lead of influencers rather than doctors. (In a survey conducted by the American Academy of Dermatology in 2022, 28 percent of Gen Z respondents said that getting a tan was more important to them than protecting themselves from skin cancer.) No wonder that even the very young are experimenting with skin-care products for which they surely have no need: “Girls as young as 8,” the Associated Press reported in 2024, “are turning up at dermatologists’ offices with rashes, chemical burns and other allergic reactions to products not intended for children’s sensitive skin.”

These are kids doing what young people have always done—seeking guidance on how to look, and how to be, in the world. But they are getting their advice directly from influencer-marketers who act like friends yet stoke anxieties in the manner of frenemies.

Some reluctant performers, in response to the endless exposure, have been looking for ways to make celebrity optional again. Finstas—fake Instagrams—are candid accounts featuring images (selfies, typically) that are, by design, poorly lit, awkwardly angled, and otherwise stridently unphotogenic. The app BeReal gives users a two-minute window each day, at varying times, to post pictures of whatever they’re doing at that moment. “BeReal won’t make you famous,” the company said in 2022. “If you want to be an influencer you can stay on TikTok and Instagram.”

But the easiest way to avoid being perceived is to opt out of public settings altogether. TikTokers have adopted terms such as cocooning and bed rotting to describe not just being at home but purposefully staying at home as a soothing alternative to the demands of public exposure. A popular meme genre conveys the relief people claim to feel when an outing has been called off. (Me when my plans get canceled, these posts tend to say—followed by a GIF of a kid dancing or a video of Luther Vandross crooning, “It’s all right, it’s all right, ooh, baby, it’s all riiiiight.”)

As my colleague Ellen Cushing argued last year, Americans are experiencing a national “party deficit.” (One of the many bleak statistics she cited: On an average weekend or holiday in 2023, only 4.1 percent of Americans attended or hosted a social event, a 35 percent decrease from 2004.) Hanging out, that low-stakes and time-honored form of socializing, has recently become so endangered that, in 2023, the professor and critic Sheila Liming offered a book-length defense of its pleasures. These declines can be traced to many causes. One may be that gatherings can introduce new vulnerabilities to the people who attend them. In the smartphone era, parties and hangouts might as well be free-for-all movie sets, where cameras are always zooming in, and anyone in attendance risks being perceived.

Even love, in this era, gets conscripted into the show. Romantic partnerships have always been, to some extent, public-facing projects. Now they double as content. The workings of people’s hearts have data trails. Declarations of affection are one more thing that people “do for the ’gram.” Relationships might be “soft launched” on social media, offered up to followers who serve as audiences, reviewers, and focus groups. If the new product tests well—if the critics approve—it might be “hard launched” or made “Instagram official,” with handles tagged, swooning captions crafted, likes sought and won.

The language of the hard launch is, in one respect, a new version of an old story—the modern-day “going steady.” The crucial difference, though, is that the hard launch assumes the existence of a wide and hungry audience. When love is a performance made for others’ consumption, it, too, becomes subject to the standard cynicisms of the show: Is it a performance or not? True love or not? Love conquers all, unless love doesn’t get enough likes.

Stage fright—although it can’t be cured—can be managed. Smartphones and social media are doing what other transformative technologies have done: breaking old paradigms, leaving voids to be filled. These disruptions can seem destructive. They can also be opportunities.

Despite the forces pressing on them, people can find new ways to see one another over the distance. They might, as life’s performers, accept what they are offered, too often, on the commoditized internet: collectivity without community. But they might also look up from their screens, choose to write new scripts—and remember that stages are meant to be shared.

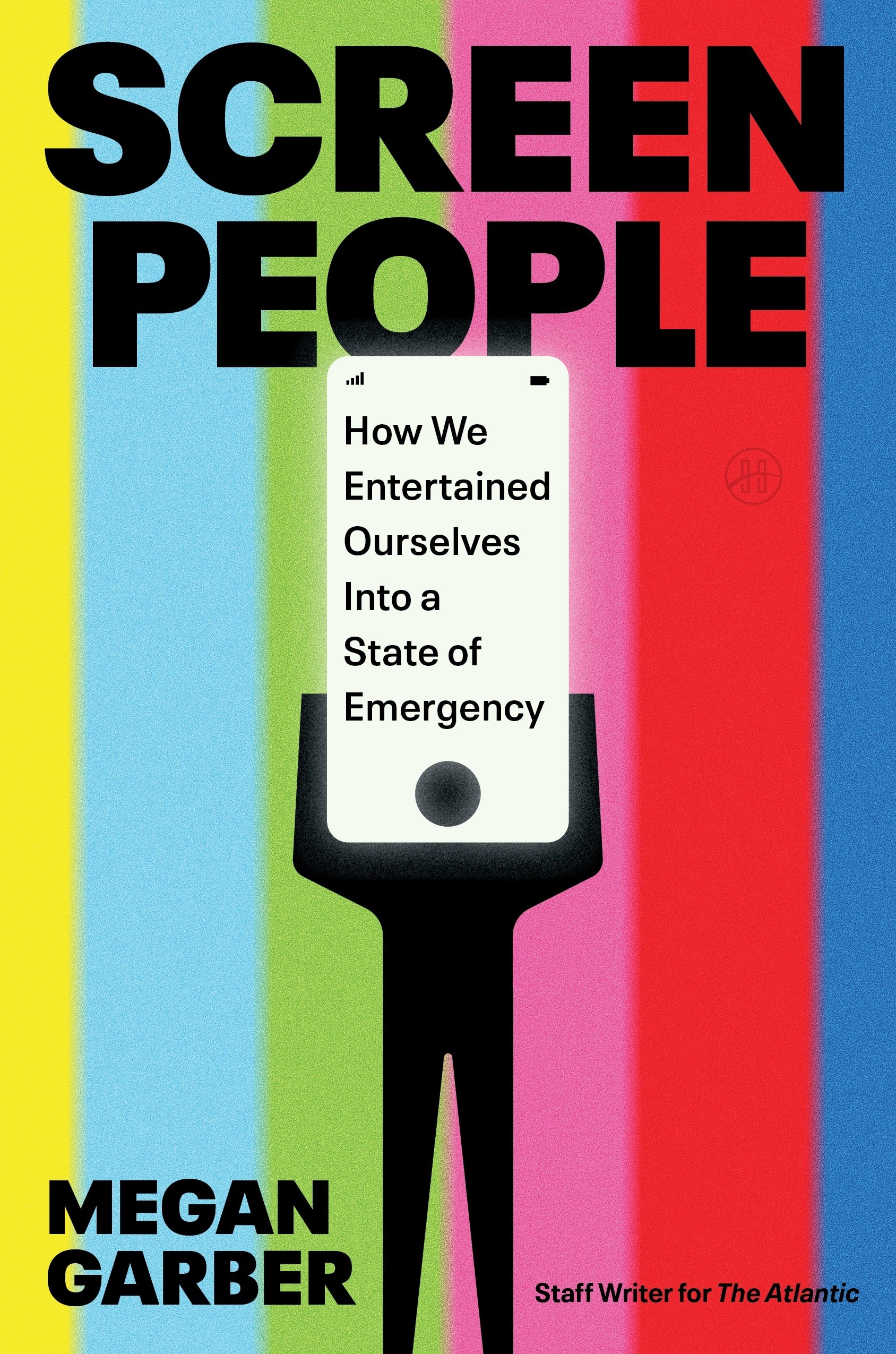
